Neuropower Tutorial

Getting Started

Pilot data

This toolbox starts from an unthresholded statistical map computed on pilot data. There are several possible sources for pilot data:

- Collecting a pilot dataset. Yes, collecting pilot data is expensive. Yet it is your best option for a power analysis.

- Open data. If you don't have the resources to collect pilot data, remember that many researchers share their data. NeuroVault is a good source for statistical maps! Try to find an experiment with a study design comparable to yours, and you will have a good proxy for pilot data.

- Previously collected data. You or your colleagues might have data lying around with an experimental setup comparable to your new study. You can use these data. And while you're at it, why not share them online?

Responsible research practices

With sample sizes that are too small, true effects can be missed, the magnitude of statistically significant effects is exaggerated, and significant findings are unlikely to replicate. A power analysis is therefore a crucial step towards a powerful and reproducible neuroimaging study. However, power analyses can also be misused for questionable research practices. Here's what you shouldn't use our toolbox for.

No data peeking

Imagine you collect data from 10 subjects and perform an analysis. There are two possibilities:

- You find the significant effects you were hypothesising:

  Woohoo! This means I can stop my experiment, write my paper and publish my findings.

- Not everything you expected is significant, but there is a trend:

  You decide to add a few more subjects and then look at the data again. To decide how many more subjects you'll need, you use our toolbox.

THAT IS NOT A GOOD USE OF THIS TOOLBOX. This practice is known as data peeking, or conditional stopping. You cannot let your decision to stop or continue depend on the outcome of statistical inference: doing so inflates your type I error rate. Tal Yarkoni wrote a detailed explanation of why data peeking is so bad.
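The inflation is easy to see in a small simulation. The sketch below is only an illustration under simplifying assumptions: it uses a one-sample z-test on simulated subject-level scores with known unit variance (not the voxelwise analysis the toolbox works with), and the 10 + 10 subjects and the "trend" cutoff of p < 0.2 are arbitrary, hypothetical choices. Every simulated dataset is pure noise, so any significant result is a false positive.

```python
import random
from statistics import NormalDist

STD_NORMAL = NormalDist()


def two_sided_p(sample):
    """Two-sided p-value of a one-sample z-test (H0: mean = 0, known sd = 1)."""
    z = sum(sample) / len(sample) ** 0.5  # sample mean divided by its standard error 1/sqrt(n)
    return 2 * (1 - STD_NORMAL.cdf(abs(z)))


def false_positive_rates(n_sims=20000, n_first=10, n_extra=10, alpha=0.05, trend=0.2, seed=1):
    """Simulate pure-noise data and compare a fixed design with a peeking design."""
    rng = random.Random(seed)
    fixed_hits = peek_hits = 0
    for _ in range(n_sims):
        # The null hypothesis is true: every subject's score is pure noise.
        data = [rng.gauss(0, 1) for _ in range(n_first + n_extra)]
        # Fixed design: test once, on all subjects.
        if two_sided_p(data) < alpha:
            fixed_hits += 1
        # Peeking design: look after n_first subjects...
        p_first = two_sided_p(data[:n_first])
        if p_first < alpha:
            peek_hits += 1  # ...stop and publish if significant,
        elif p_first < trend and two_sided_p(data) < alpha:
            peek_hits += 1  # ...or, after a "trend", add subjects and test again.
    return fixed_hits / n_sims, peek_hits / n_sims


fixed_rate, peek_rate = false_positive_rates()
print(f"fixed design: {fixed_rate:.3f}, peeking design: {peek_rate:.3f}")
```

The fixed design stays close to the nominal 5%, while the peeking design rejects the (true) null more often. Every extra look at the data adds another chance to stop on a fluke.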

How can you prevent this?

- Don't perform statistical inference on your pilot data, and don't re-use your pilot data in your final analysis.

- There are ways to correct for conditional stopping. However, while such corrections protect your false positive rate, they decrease your overall power and therefore the reproducibility of your study. We do not recommend this approach.
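To see the trade-off, here is a sketch of the simplest such correction: splitting the significance level over the looks (a Bonferroni correction; group-sequential designs use less conservative boundaries, but the principle is the same). It assumes the same hypothetical one-sample z-test with known unit variance, two looks (after 10 and 20 subjects) each tested at alpha/2, and compares the rejection rate with a single test on 20 subjects. It is run once under the null (effect 0) and once under a hypothetical true effect of 0.5 standard deviations.

```python
import random
from statistics import NormalDist

STD_NORMAL = NormalDist()


def two_sided_p(sample):
    """Two-sided p-value of a one-sample z-test (H0: mean = 0, known sd = 1)."""
    z = sum(sample) / len(sample) ** 0.5
    return 2 * (1 - STD_NORMAL.cdf(abs(z)))


def rejection_rates(effect, n_sims=10000, n_first=10, n_total=20, alpha=0.05, seed=2):
    """Return (fixed-design rate, Bonferroni-corrected two-look rate)."""
    rng = random.Random(seed)
    fixed_hits = corrected_hits = 0
    for _ in range(n_sims):
        data = [rng.gauss(effect, 1) for _ in range(n_total)]
        # Fixed design: a single test at level alpha on all subjects.
        if two_sided_p(data) < alpha:
            fixed_hits += 1
        # Corrected peeking: each of the two looks is tested at alpha / 2,
        # so the union bound keeps the overall false positive rate <= alpha.
        if two_sided_p(data[:n_first]) < alpha / 2 or two_sided_p(data) < alpha / 2:
            corrected_hits += 1
    return fixed_hits / n_sims, corrected_hits / n_sims


results = {effect: rejection_rates(effect) for effect in (0.0, 0.5)}
for effect, (fixed, corrected) in results.items():
    label = "false positive rate" if effect == 0 else "power"
    print(f"effect {effect}: {label} fixed {fixed:.3f}, corrected two-look {corrected:.3f}")
```

Under the null, the corrected design keeps its false positive rate below 5%. Under a true effect, it detects the effect less often than a single test on the same 20 subjects: the price of protecting the error rate is power.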

Do not re-use your pilot data

Even if you don't perform statistical inference on the pilot data, the pilot data should be independent of the final data. Why? Re-using the data still increases your overall type I error rate. You can find more details in Jeanette Mumford's guide for power calculations. We are working on a method that will allow you to re-use your data (still without inference!). We'll present it at OHBM 2016.
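A small simulation shows why re-use is a problem even without inference. This is our own illustration, not the argument from Mumford's guide or the method we will present. It assumes a hypothetical workflow: the effect size estimated from a 10-subject pilot is plugged into the standard z-test sample-size formula for 80% power (capped at a hypothetical maximum of 100 subjects). The final test then either includes the pilot subjects (re-use) or uses a fresh sample of the same size (independent). All data are pure noise.

```python
import math
import random
from statistics import NormalDist

STD_NORMAL = NormalDist()


def z_test_p(total, n):
    """Two-sided p-value of a one-sample z-test (H0: mean 0, known sd 1), given the sum of n scores."""
    z = total / math.sqrt(n)
    return 2 * (1 - STD_NORMAL.cdf(abs(z)))


def required_n(effect, alpha=0.05, power=0.8, n_max=100):
    """Sample size for a two-sided z-test to reach `power` if the true effect equals `effect`."""
    if effect == 0:
        return n_max
    z_alpha = STD_NORMAL.inv_cdf(1 - alpha / 2)
    z_beta = STD_NORMAL.inv_cdf(power)
    return min(n_max, math.ceil(((z_alpha + z_beta) / abs(effect)) ** 2))


def type1_rates(n_sims=10000, n_pilot=10, alpha=0.05, seed=3):
    """False positive rates when the pilot is (a) re-used or (b) replaced by fresh data."""
    rng = random.Random(seed)
    reuse_hits = independent_hits = 0
    for _ in range(n_sims):
        # No inference on the pilot: only its effect size estimate sets the sample size.
        pilot_sum = sum(rng.gauss(0, 1) for _ in range(n_pilot))
        n_final = max(n_pilot, required_n(pilot_sum / n_pilot, alpha))
        # (a) Re-use: the pilot subjects become part of the final sample.
        extra_sum = sum(rng.gauss(0, 1) for _ in range(n_final - n_pilot))
        if z_test_p(pilot_sum + extra_sum, n_final) < alpha:
            reuse_hits += 1
        # (b) Independent: a fresh sample of the same size.
        fresh_sum = sum(rng.gauss(0, 1) for _ in range(n_final))
        if z_test_p(fresh_sum, n_final) < alpha:
            independent_hits += 1
    return reuse_hits / n_sims, independent_hits / n_sims


reuse_rate, independent_rate = type1_rates()
print(f"re-used pilot: {reuse_rate:.3f}, independent data: {independent_rate:.3f}")
```

The mechanism: a pilot that looks strong by chance leads to a small final sample in which the pilot subjects dominate, so the final test inherits the pilot's fluke. With independent data, the chosen sample size carries no information about the final noise, and the rate stays near 5%.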

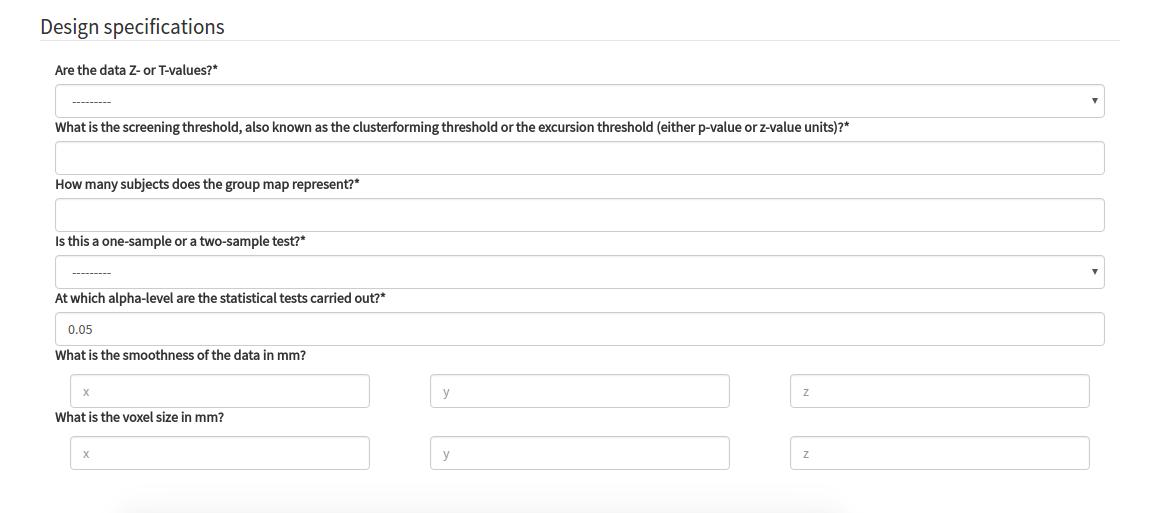

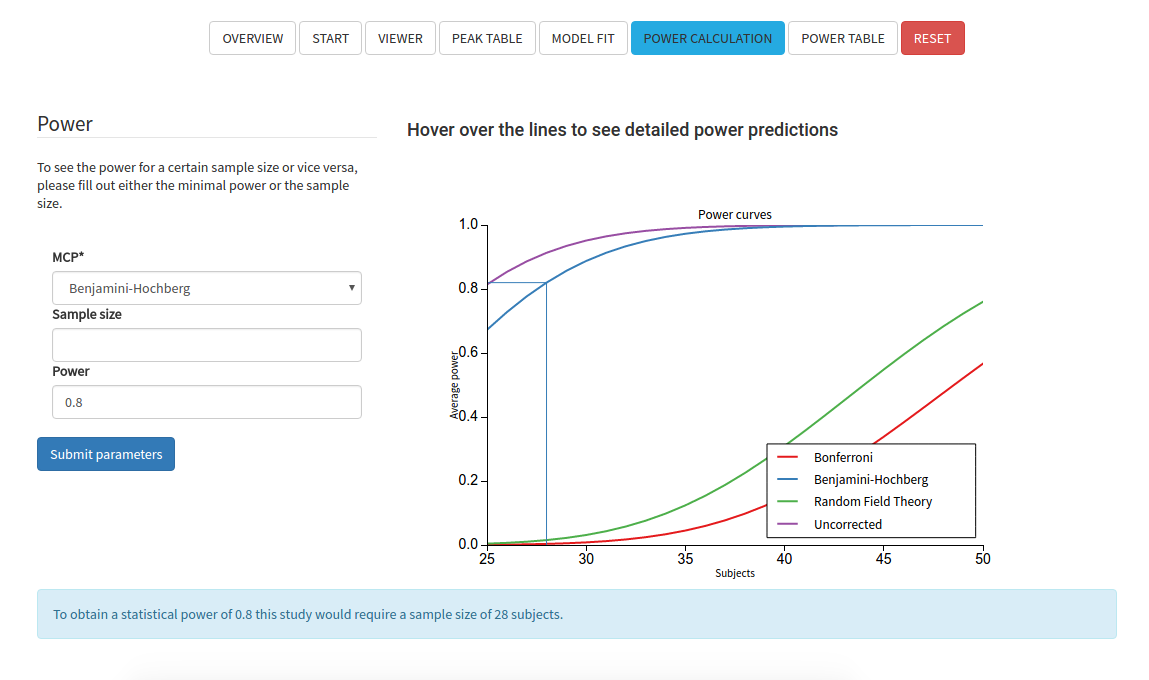

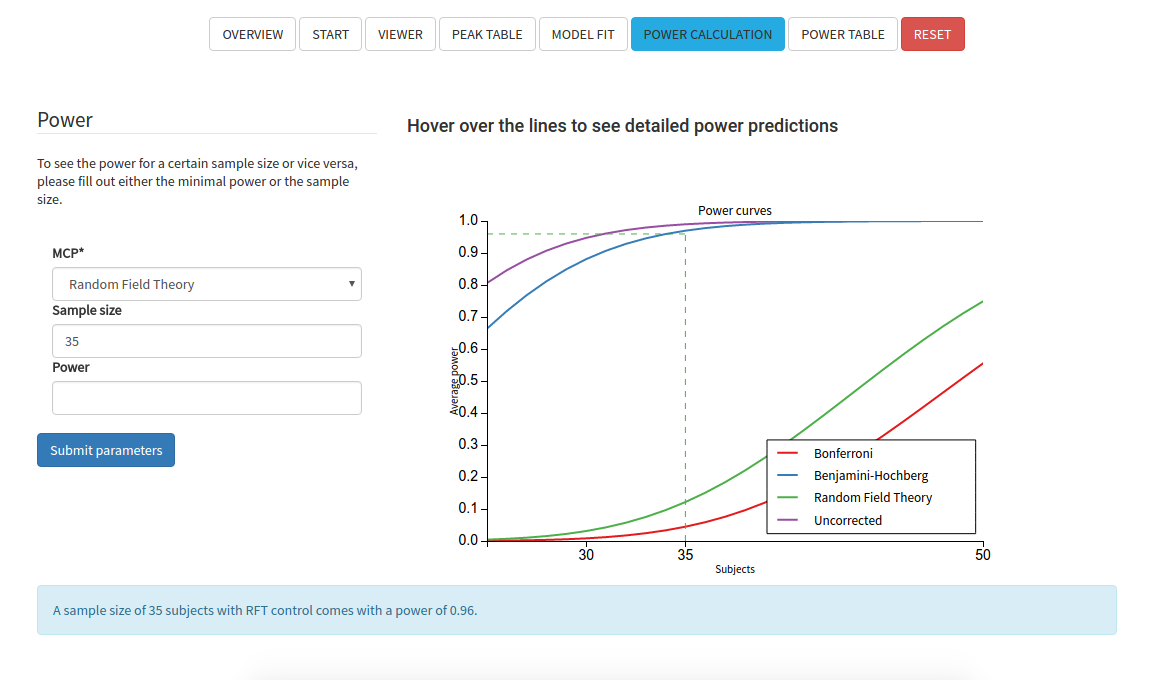

Conditional power

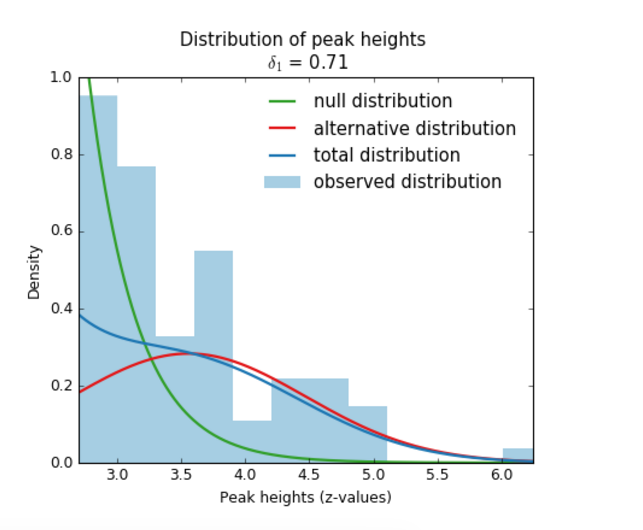

It is important to note that both peakwise and clusterwise analyses use a screening threshold (also known as the cluster-forming threshold or the excursion threshold). Only voxels above this threshold are considered for significance testing. SPM uses a default screening threshold of p<0.001 (only voxels with p-values smaller than 0.001 are retained for further analysis). FSL uses a default screening threshold of Z>2.3 (only voxels with z-values higher than 2.3 are used).

Our measure of power is conditional: it is the average chance of detecting an active peak, computed over all peaks above the screening threshold. All activity below the screening threshold is ignored. As a consequence, a power estimate cannot be reported independently of the screening threshold.
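The sketch below first puts the two default screening thresholds on a common scale (one-sided, standard normal), then illustrates why conditional power depends on the screening threshold. For illustration only, it assumes that active peak heights follow a normal distribution with a hypothetical mean of 3.5 and unit variance, and it uses a hypothetical fixed significance threshold of z > 4.0. Conditioning on peaks above the screening threshold turns this into a truncated normal. These numbers and the simple model are assumptions, not the toolbox's estimation procedure.

```python
from statistics import NormalDist

STD_NORMAL = NormalDist()

# Default screening thresholds expressed on both scales (one-sided).
z_spm = STD_NORMAL.inv_cdf(1 - 0.001)  # SPM: p < 0.001  ->  z > ~3.09
p_fsl = 1 - STD_NORMAL.cdf(2.3)        # FSL: z > 2.3    ->  p < ~0.0107


def conditional_power(mu, u_screen, u_sig):
    """P(peak height > u_sig | peak height > u_screen) for an active peak.

    Illustrative assumption: active peak heights ~ Normal(mu, 1).
    Requires u_sig >= u_screen so the ratio is a proper conditional probability.
    """
    p_sig = 1 - STD_NORMAL.cdf(u_sig - mu)
    p_screen = 1 - STD_NORMAL.cdf(u_screen - mu)
    return p_sig / p_screen


MU, U_SIG = 3.5, 4.0  # hypothetical mean active-peak height and significance threshold
print(f"SPM default z = {z_spm:.2f}, FSL default p = {p_fsl:.4f}")
for name, u_screen in [("SPM default", z_spm), ("FSL default", 2.3)]:
    power = conditional_power(MU, u_screen, U_SIG)
    print(f"{name}: screening z > {u_screen:.2f}, conditional power = {power:.2f}")
```

With the same effect and the same significance threshold, the estimate is about 0.47 with the SPM default and about 0.35 with the FSL default, simply because a lower screening threshold admits more weak peaks into the average. This is why a power estimate should always be reported together with its screening threshold.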

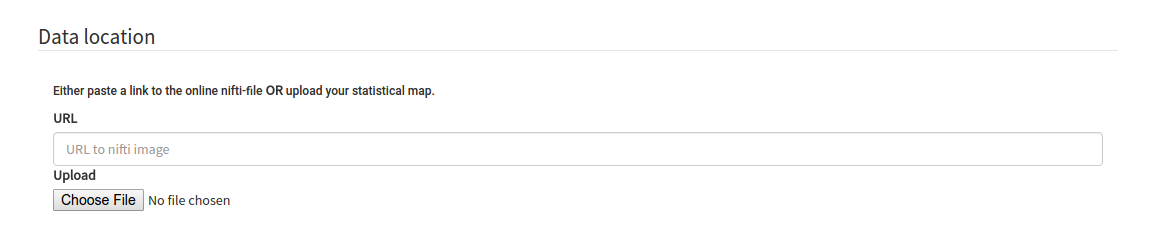

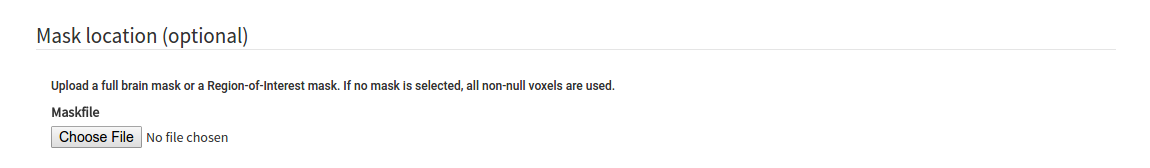
